Introduction

GPT models are remarkable and can do many things, from writing code to translating texts to generating new content. I personally use GPT models very frequently to generate text from bullet points. I no longer write emails myself; instead, I collect the important parts as bullet points and then tell GPT to write a nice email. Done.

But how far can we take that approach? And what does that mean for journalists and online content?

An Automated Publication Platform

To find out, I created an automated publication system that looks like a newsfeed, except that this newsfeed is completely AI-generated. The system crawls data, generates articles, and publishes them.
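The post does not describe how AI-Newsfeed is implemented internally, so the following is only a minimal sketch of a crawl, generate, publish loop under stated assumptions. The names `SourceItem`, `Article`, `build_prompt` and `run_pipeline` are hypothetical, and the model call is injected as a plain `generate` function so that a real GPT request (or an offline stub, as in the demo) can be plugged in.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class SourceItem:
    """One crawled input item (hypothetical structure)."""
    title: str
    summary: str
    url: str


@dataclass
class Article:
    """A generated article ready for publishing (hypothetical structure)."""
    headline: str
    body: str
    source_url: str


def build_prompt(item: SourceItem) -> str:
    # Turn the crawled facts into a plain-text instruction for the language model.
    return (
        "Write a short news article based on the following source.\n"
        f"Title: {item.title}\n"
        f"Summary: {item.summary}\n"
    )


def run_pipeline(
    crawl: Callable[[], Iterable[SourceItem]],
    generate: Callable[[str], str],
    publish: Callable[[Article], None],
) -> List[Article]:
    """Crawl, generate, publish. Each stage is passed in as a callable,
    so the model call can be swapped out or stubbed for testing."""
    published: List[Article] = []
    for item in crawl():
        body = generate(build_prompt(item))
        article = Article(headline=item.title, body=body, source_url=item.url)
        publish(article)
        published.append(article)
    return published


if __name__ == "__main__":
    # Offline demo with stubbed stages; no network access or API key needed.
    feed: List[Article] = []
    items = [SourceItem("Rain in Munich", "Heavy rain expected on Monday.", "https://example.com/1")]
    result = run_pipeline(
        crawl=lambda: items,
        generate=lambda prompt: "ARTICLE: " + prompt.splitlines()[1],
        publish=feed.append,
    )
    print(result[0].body)
```

Keeping the stages as separate functions makes it easy to insert a human review step between generation and publishing, which the observations below suggest is still useful.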

Interestingly, the system can also emulate the style of individual authors: not only their writing style but also their personal preferences, such as a left-wing or right-wing leaning. This makes the articles look very human.
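One plausible way to emulate an author is to describe the persona in a system message and pass the crawled facts in a user message. The sketch below assumes exactly that; `AuthorPersona` and `build_messages` are hypothetical names, and the list of `role`/`content` dictionaries mirrors the message format of OpenAI's Chat Completions API, while the actual API call is deliberately left out.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class AuthorPersona:
    """Hypothetical description of an AI author; field names are illustrative."""
    name: str
    style: str
    leaning: str
    dialect: Optional[str] = None


def build_messages(persona: AuthorPersona, facts: List[str]) -> List[Dict[str, str]]:
    # The persona goes into the system message so that every article by this
    # author shares the same voice; the crawled facts go into the user message.
    system = (
        f"You are {persona.name}, a newspaper columnist. "
        f"Writing style: {persona.style}. "
        f"Political leaning: {persona.leaning}."
    )
    if persona.dialect:
        system += f" Write the article in {persona.dialect} dialect."
    user = "Write a news article based on these facts:\n" + "\n".join(
        f"- {fact}" for fact in facts
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


if __name__ == "__main__":
    sepp = AuthorPersona("Sepp", "chatty and anecdotal", "conservative", dialect="Bavarian")
    for message in build_messages(sepp, ["Heavy rain is expected in Munich on Monday."]):
        print(message["role"], "->", message["content"])
```

Feeding the same facts through two different personas would also be a simple way to produce the left-wing versus right-wing comparison listed under next steps.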

Observations

The generated articles are grammatically correct. The human touch of the AI authors makes them very hard to distinguish from human-written content. One AI author even writes in Bavarian dialect. Funny, and very human.

But sometimes the text generation goes wrong, producing strange formatting or simply odd sentences. So far I have not found an instance of hallucination, but I can't rule it out. This suggests that human intervention is still beneficial.

I think that these AI-based publication pipelines will change the way journalists work and the way content is created: from simply “writing the text” to “working on the text” jointly with the AI.

While the current iteration of AI-Newsfeed is based on GPT-3.5, my own (so far anecdotal) experience indicates that OpenAI’s GPT-4 offers substantial improvements.

Implications for Society

The emergence of AI-generated content inevitably raises questions about its impact on society. There will be a lot of it.

But in my opinion, that is NOT much different from the current state of affairs. There is already a lot of content out there that exists solely to trick people into clicking on links or to trick search engines into ranking pages more highly. AI content is no different.

But this also means that original content (e.g., from the Economist or NZZ) will stand out. People can tell good content apart from crappy, purely AI-generated content. Good content makes a difference, and good research on a topic cannot be done with AI alone.

Next Steps for AI-Newsfeed

Running AI-Newsfeed is not expensive, but it is not cheap either. For me, it serves as a great platform for exploring the limits of the technology, and it’s really fun to build.

Potential next steps are:

- Internationalization: English content and English-language AI authors. Right now, everything is in German.

- Switch to GPT-4 for content creation. It seems to be much better (anecdotal evidence).

- Create articles from the same base information by different AI authors. How cool would it be to compare the writing of a left-wing AI author and a right-wing AI author?

- Allow users to supply content and generate articles themselves. This would be a step toward an AI pipeline usable by journalists.

Conclusion

AI-generated content is an opportunity, not a danger.

We already have to deal with crappy content, and AI won’t change that. Good content will always stand out, and this is important! The best journalists will use AI to help them write their articles, but AI will never be able to replace great journalistic research on current topics.

More

- AI-Newsfeed: https://ai-newsfeed.r10r.com

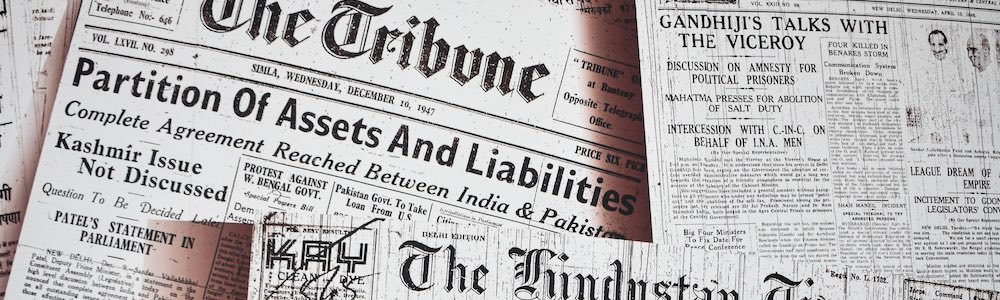

- Image on top by the awesome Rishabh Sharma